
The Key Aspect of Problem Framing in Building AI & ML Applications

As we mentioned in our post ML & AI: From Problem Framing to Integration, problem framing is crucial: a well-defined problem sets the direction for the entire AI project, influencing data collection, model selection, and the overall success of the solution.

In this post, we expand on the question framework we use in the problem-framing phase, with sample answers from our Tanoto project.

Key considerations for problem framing

Project Motivation

What is the problem you want to solve?

- The first crucial step in any AI/ML project is to clearly define the problem you want to solve. A clear vision of the project also motivates your team and conveys why the project matters.

Problem Definition

This involves identifying the specific issue, understanding its context, and outlining the desired outcomes. This step helps you set the right strategic goal and avoid unnecessary complexity.

What specific output do you want to predict?

- The clarity gained from defining the specific output is essential for making informed decisions about data collection, model selection, and evaluation metrics. Different outputs may require distinct approaches, and having a precise target allows for a more tailored and effective development strategy.

What input do you have?

- Focus on understanding the nature, source, and characteristics of the data that will be fed into the AI system, because they affect how your model learns and makes predictions.

How many training samples can you provide?

- If you are building your own dataset, how many training samples will it have? You can also add more samples by crawling the internet or using public datasets.

Performance Measurement

Are there reference solutions, like a rival company’s products or research papers?

- This step provides a broader perspective and context for the AI development process. It is a strategic step that encourages learning from the successes and failures of others, fostering innovation, efficiency, and the delivery of high-quality solutions.

Do you have a benchmark?

- A benchmark could be a well-established industry standard, a past performance metric, or even a comparable project. The key is to select a benchmark that aligns with the nuances of your particular situation, providing a clear and meaningful basis for evaluation. This way, you not only track your performance but also gain valuable insights into areas for improvement and potential refinements.

What evaluation metrics are you using?

- The choice of evaluation metrics varies depending on the specific problem we're tackling. We customize our metrics to align with the objectives and complexities of each task.

What is the minimum level of metrics you expect?

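- The minimum level you expect determines your tuning strategy, and it depends on what matters most to you. For example, if missing a particular class is costly, you might set a floor on recall for that class rather than on overall accuracy alone.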

What would a perfect solution look like?

- This question will provide direction, set standards, and guide the AI development process.

Timeline

Are there deadlines to be aware of?

- This helps maintain focus, manage resources efficiently, and align the project with broader business objectives.

When can you provide the first result / when will the customer expect the first result / final solution?

- The sooner you deliver the first result, the sooner you can uncover potential challenges and engage the customer throughout the process to ensure alignment with expectations and requirements.

Setup team

Who will be involved? Who is your backup team, and who is your domain expert?

- Make it clear who your engineers can turn to. Domain experts can maintain a feedback loop with the development team, continually providing insights and guidance throughout the app development process.

What technologies can be used? Will our team need to learn new skills?

- This is essential for making informed decisions, planning resource allocation, and proactively addressing potential challenges related to technology adoption and skill development in the project.

Problem framing sample with Tanoto

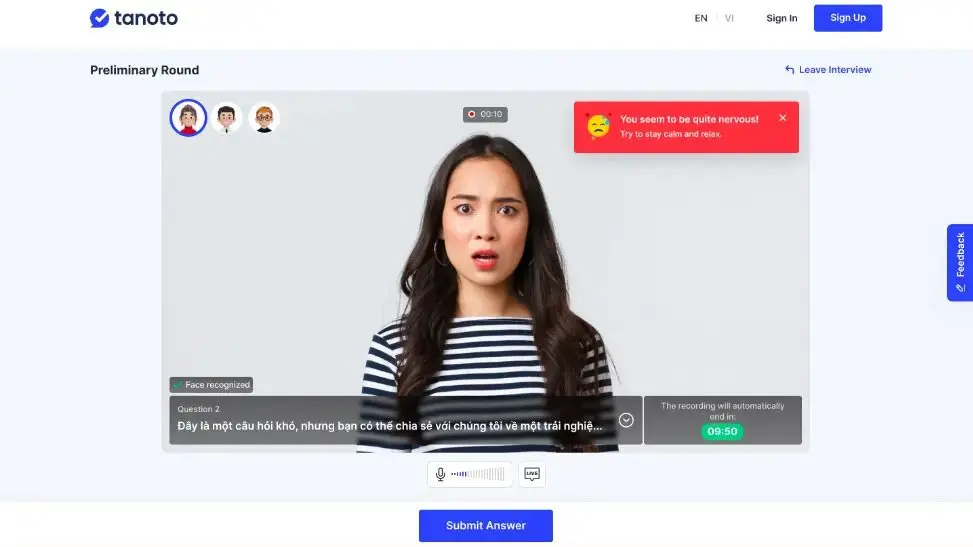

In this section, we will look at how Tanoto’s AI interviewer was built at CodeLink. The Tanoto application replicates an online interview experience with an AI interviewer that is easily accessible to users who want to improve their interview skills.

Project Motivation

First, we have to clarify the application’s motivation. We found that non-verbal expressions during communication are as significant as spoken words. So we want to give recommendations and advice based on the emotions shown in facial expressions during the interview, using expression classification.

Problem Definition

Now we define our input and output. The output of the model is the single facial expression the interviewee is showing, chosen from happiness, fear, anger, disgust, surprise, sadness, and neutral.

The input will be a frame from the webcam feed showing the user’s face. Facial expression recognition is a very common problem, so there are many public datasets; for this example, we use the FER2013 dataset.
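To make the input concrete, here is a minimal Python sketch of decoding one FER2013-style record into a 48x48 grayscale grid. It assumes the commonly distributed CSV layout with `emotion`, `pixels`, and `Usage` columns and the usual label order; both are assumptions to verify against your own copy of the dataset, and `parse_row` and `load_rows` are hypothetical helper names, not part of the Tanoto codebase. The sample row is synthetic.

```python
import csv
import io

# Label order as commonly documented for FER2013 (assumption: check your copy).
EMOTIONS = ["anger", "disgust", "fear", "happiness", "sadness", "surprise", "neutral"]
IMAGE_SIZE = 48  # FER2013 images are 48x48 grayscale


def parse_row(row):
    """Return (label_name, image), where image is 48 rows of 48 pixel values."""
    values = [int(v) for v in row["pixels"].split()]
    expected = IMAGE_SIZE * IMAGE_SIZE
    if len(values) != expected:
        raise ValueError(f"expected {expected} pixels, got {len(values)}")
    # Slice the flat pixel list into rows of IMAGE_SIZE values each.
    image = [values[i:i + IMAGE_SIZE] for i in range(0, expected, IMAGE_SIZE)]
    return EMOTIONS[int(row["emotion"])], image


def load_rows(csv_text):
    """Parse every record in a CSV string that has a header line."""
    return [parse_row(r) for r in csv.DictReader(io.StringIO(csv_text))]


# Synthetic one-row example: a uniform gray image labelled 3.
sample_csv = "emotion,pixels,Usage\n3," + " ".join(["128"] * 2304) + ",Training\n"
samples = load_rows(sample_csv)
print(samples[0][0], len(samples[0][1]), len(samples[0][1][0]))
```

Counting the labels returned by `load_rows` over the full file is a quick way to answer the "how many training samples" question per class.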

The pipeline receives an image frame, then extracts a square box containing a single face using face detection and segmentation. The cropped face is then fed into an image classifier to determine an emotion label.
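The flow from frame to emotion label can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the production code (which runs in the browser): the detector and classifier are passed in as plain functions, and `to_square`, `crop`, `classify_frame`, and the order of `EMOTIONS` are hypothetical names and choices made for this example.

```python
from typing import Callable, List, Optional, Sequence, Tuple

Frame = List[List[int]]           # grayscale frame as rows of pixel values
Box = Tuple[int, int, int, int]   # (left, top, width, height)

# Hypothetical label order; it must match whatever the classifier was trained with.
EMOTIONS = ["anger", "disgust", "fear", "happiness", "sadness", "surprise", "neutral"]


def to_square(box: Box, frame_w: int, frame_h: int) -> Box:
    """Grow a face box into a square around its center, clamped to the frame."""
    left, top, w, h = box
    side = min(max(w, h), frame_w, frame_h)
    cx, cy = left + w // 2, top + h // 2
    new_left = min(max(cx - side // 2, 0), frame_w - side)
    new_top = min(max(cy - side // 2, 0), frame_h - side)
    return new_left, new_top, side, side


def crop(frame: Frame, box: Box) -> Frame:
    """Cut the box out of the frame."""
    left, top, w, h = box
    return [row[left:left + w] for row in frame[top:top + h]]


def classify_frame(
    frame: Frame,
    detect_face: Callable[[Frame], Optional[Box]],
    score_emotions: Callable[[Frame], Sequence[float]],
) -> Optional[str]:
    """Detect one face, crop it to a square, and return the top-scoring label."""
    box = detect_face(frame)
    if box is None:
        return None  # no face in this frame, so there is nothing to classify
    face = crop(frame, to_square(box, len(frame[0]), len(frame)))
    scores = score_emotions(face)
    best = max(range(len(scores)), key=scores.__getitem__)
    return EMOTIONS[best]


# Stand-ins for the real detector and classifier, for demonstration only.
demo_frame = [[0] * 10 for _ in range(8)]           # 10 wide, 8 tall
fake_detector = lambda frame: (2, 1, 4, 6)
fake_scorer = lambda face: [0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0]
result = classify_frame(demo_frame, fake_detector, fake_scorer)
print(result)
```

Keeping the detector and classifier as injected functions means each stage can be swapped or tested separately, which also mirrors how the work is split between team members.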

Performance Measurement

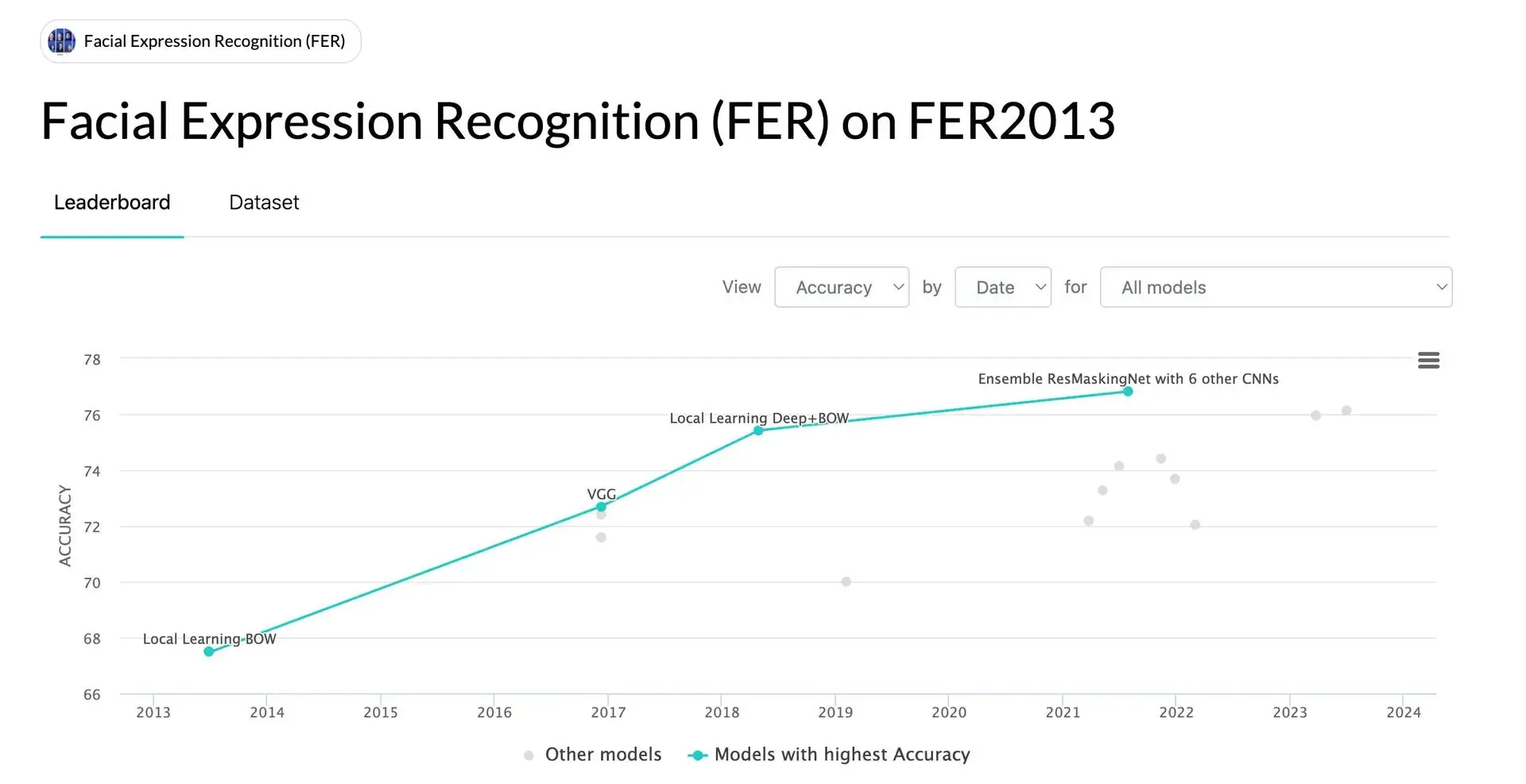

Since we're working with a public dataset, we've got a handy benchmark to guide us. Head over to Paperswithcode.com to check the dataset's benchmarks. At the time of writing, the FER2013 benchmark has a top accuracy of 76.826%. So we've set a minimum target accuracy of 70%, and we also track other evaluation metrics such as precision, recall, and F1 score.
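For readers who want to see how these metrics relate, here is a small self-contained Python sketch that computes accuracy plus per-class precision, recall, and F1, then checks the 70% accuracy floor mentioned above. The function names and the toy labels are hypothetical; in practice a library such as scikit-learn would usually do this for you.

```python
from collections import Counter

MIN_ACCURACY = 0.70  # the minimum target from this post


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def per_class_metrics(y_true, y_pred, labels):
    """Return {label: {"precision", "recall", "f1"}} computed one-vs-rest."""
    pairs = Counter(zip(y_true, y_pred))
    report = {}
    for label in labels:
        tp = pairs[(label, label)]
        fp = sum(n for (t, p), n in pairs.items() if p == label and t != label)
        fn = sum(n for (t, p), n in pairs.items() if t == label and p != label)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        report[label] = {"precision": precision, "recall": recall, "f1": f1}
    return report


# Toy predictions, not real model output.
y_true = ["happiness", "happiness", "neutral", "neutral", "sadness"]
y_pred = ["happiness", "neutral", "neutral", "neutral", "sadness"]

acc = accuracy(y_true, y_pred)
report = per_class_metrics(y_true, y_pred, ["happiness", "neutral", "sadness"])
meets_target = acc >= MIN_ACCURACY
print(f"accuracy={acc:.2f} meets_target={meets_target}")
```

Per-class numbers matter here because a classifier can clear the accuracy floor while still performing poorly on a rarely seen expression.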

Setup team

We organize a team with:

- Full-stack Web Developers to build our website with NestJS and Next.js.

- AI Engineers to develop a face emotion classification model and then convert it to run on the web using TensorFlow.js. Our Full-stack Web Developers and AI Engineers can work together to implement TensorFlow MediaPipe for face detection.

- DevOps to take care of authentication, deployment, and hosting.

Our Full-stack Web Developers haven’t worked on face detection projects before, but they are skilled in JavaScript, so they can readily handle face detection with TensorFlow MediaPipe. Our AI Engineers, in turn, have to learn basic JavaScript in less than a week to help the Full-stack Web Developers integrate the TensorFlow.js model into the website.

Timeline

The project was set for 3 months. We divided it into several 2-week sprints. After each sprint, the development team delivers new changes to the test environment for others to try out.

Conclusion

Defining the problem upfront is like choosing your destination. It shapes everything from the look and feel of the app to how it solves real user issues. It influences decisions on what features to include, how to collect data, and what tech to use.

So, if you're ever wondering why problem framing is a big deal, remember: it's the difference between a road trip with a clear destination and one with endless detours. Choose wisely, and happy app building!