Moving Beyond the "Code Tsunami" with Standardized AI-Assisted Workflows

AI-assisted coding has reached a tipping point, but not all of its output is production-ready. This guide explores how engineering teams are navigating the shift from tools to workflows, standardizing AI usage to prevent review bottlenecks, security risks, and long-term technical debt.

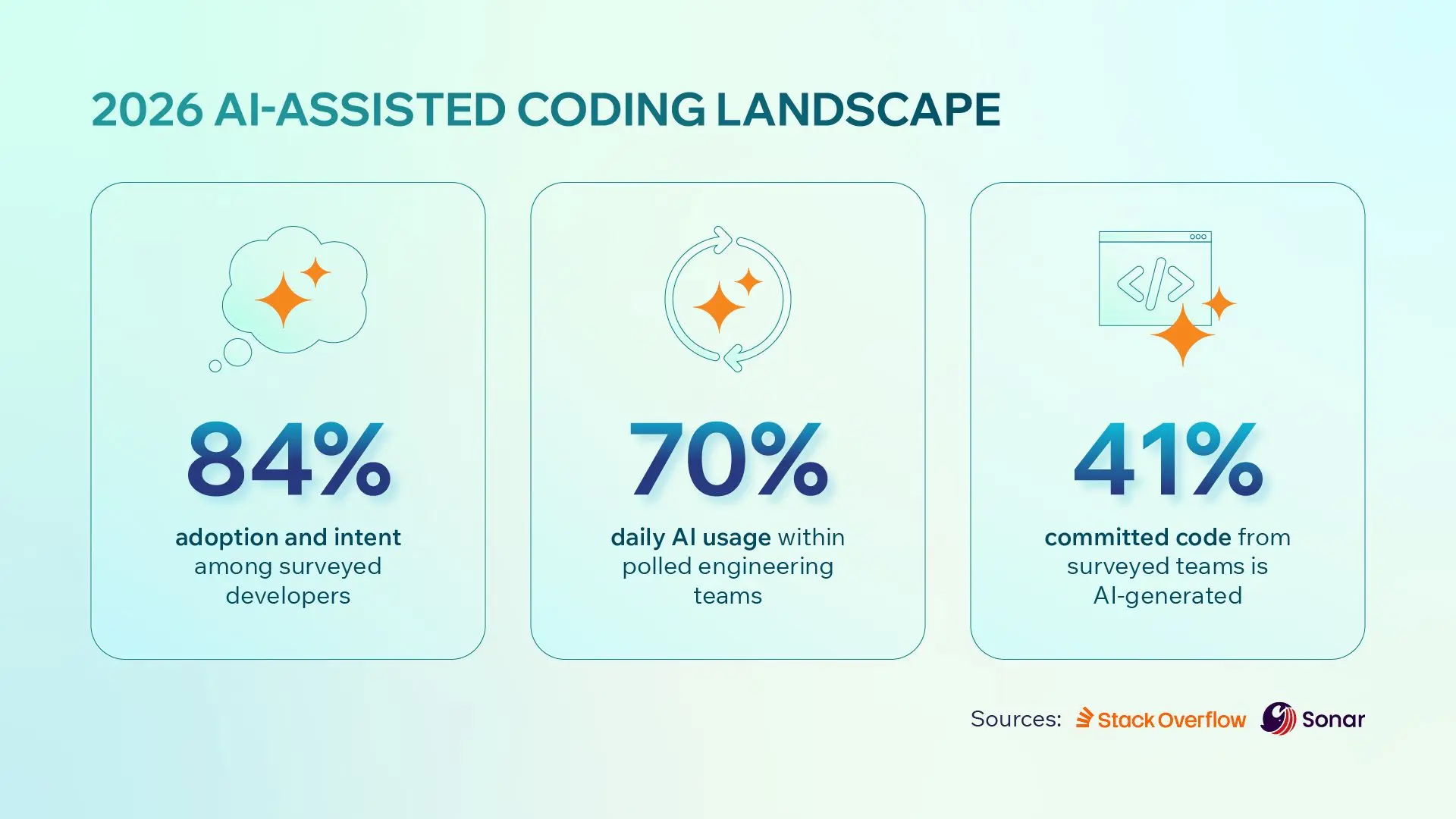

As of early 2026, we are no longer debating whether to use AI in engineering; we are navigating a "Code Tsunami." The rapid adoption of AI tools in software development has created a widening gap between sheer output and production-ready quality.

For CTOs and product leaders, the challenge has moved from "how to start" to "how to securely operationalize AI". This article explores how to move beyond fragmented AI usage toward a unified, high-performance engineering playbook.

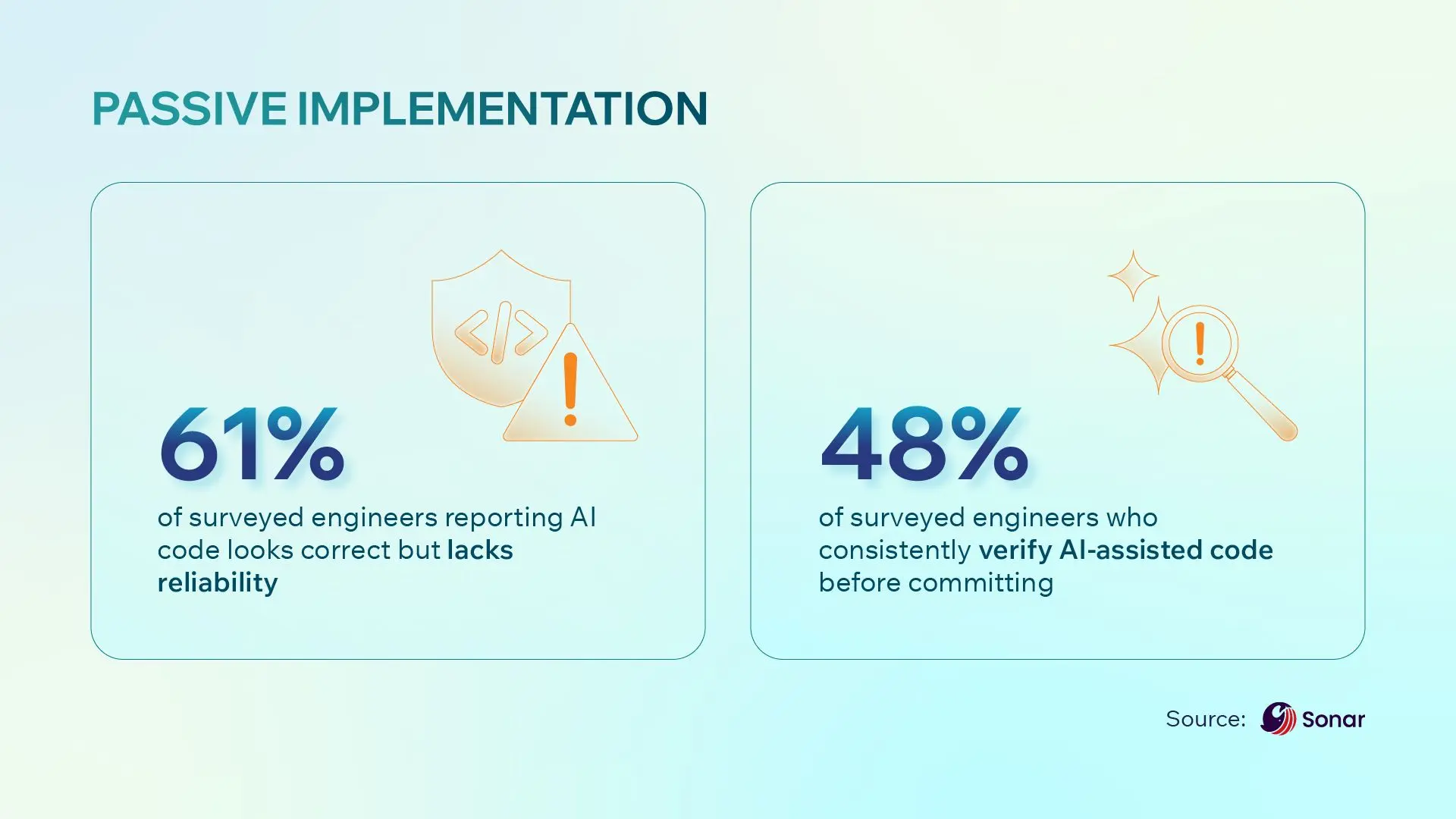

Note: The statistics above are drawn from Stack Overflow and Sonar surveys.

The 2025 Inflection Point: From Skepticism to Standard

Late 2025 marked a definitive turning point in the industry. With the release of next-generation models such as Anthropic's Claude Opus 4.5 and OpenAI's GPT-5.2, even some of the most vocal skeptics reversed their stance.

Industry veterans such as OpenAI co-founder Andrej Karpathy, who previously dismissed AI-generated code as "slop" or overhyped, now acknowledge that these tools have become powerful, shifting their focus from resisting AI to embracing it as a new kind of compiler.

Programming is being redefined: it is no longer about the act of writing code, but about systematic thinking and architectural validation.

Navigating the 2026 Landscape

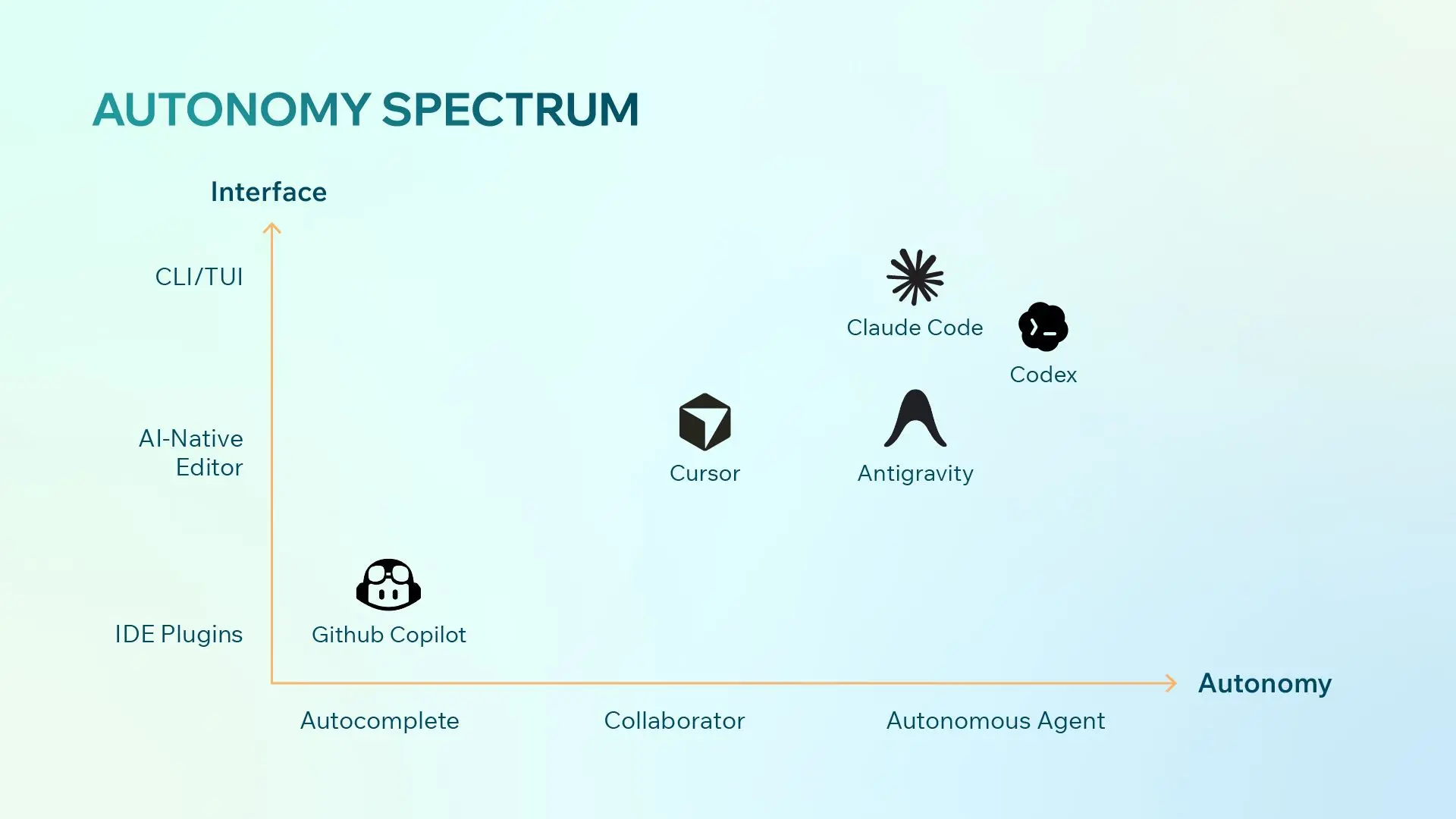

The AI-assisted coding landscape is evolving at a relentless pace. Capabilities that felt out of reach a year ago are now routine. This post is a subjective snapshot of the landscape as of March 2026, and the distinction between Assistant and Agent is already beginning to blur, a trend that will likely continue.

With that in mind, the challenge for technology and engineering leaders is no longer finding an AI tool, but matching the right tool to the stage and actual needs of a project. The market has split into three distinct categories based on the level of autonomy and integration required.

Understanding these categories allows leaders to standardize workflows that maximize engineer velocity without compromising architectural integrity.

1. AI as Assistant

- Primary Tools: GitHub Copilot, JetBrains AI

- The Mindset: Seamless integration. In this model, the developer remains the primary driver. The AI acts as a high-powered autocomplete engine, suggesting blocks of code or logic within the developer's existing IDE.

- Best Fit: Existing Production Projects. This is the lowest-friction entry point for established teams. It is ideal for developers who want AI assistance to handle boilerplate and syntax but prefer to maintain their battle-tested workflows and manual control over every line of code.

- Business Impact: Incremental productivity gains with near-zero switching costs or training overhead.

2. AI as Collaborator

- Primary Tools: Cursor, Google Antigravity, OpenAI Codex App

- The Mindset: Deep codebase context. Unlike plugins, these are standalone environments (often forks of VS Code) rebuilt around LLMs. Tools like Google Antigravity lean heavily into visual, web-dev-focused debugging, while Cursor offers deep indexing of the entire repository.

- Best Fit: Feature Work and Rapid Iteration. This is the sweet spot for mid-sized tasks (3–10 file changes). It suits developers willing to switch their primary environment in exchange for stronger multi-file refactoring, visual diffs, and an AI that understands complex project-wide dependencies.

- Business Impact: Significant reduction in context-switching time and faster delivery of complex feature sets.

3. AI as Autonomous Agent

- Primary Tools: Claude Code, OpenAI Codex CLI

- The Mindset: Terminal-first execution. This represents the most radical shift in 2026. These are terminal-based agents (CLI/TUI) that can read files, execute shell commands, run tests, and iterate on errors autonomously, without the developer ever touching a code editor.

- Best Fit: V1 Pilots and Greenfield Projects. Agents excel at large, multi-step tasks where manual typing is the bottleneck. They are well suited to zero-to-one development: setting up infrastructure, scaffolding new services, or performing large migrations where the agent can run in the background until the job is done.

- Business Impact: Drastic reduction in the time required to bring an idea to life, allowing teams to validate business hypotheses in days rather than weeks.

Risks of AI Overreliance

Passive Implementation

- The Persuasion Engine: Modern AI models are tuned to be helpful and agreeable. They respond instantly, format code with professional precision, and explain their logic with supreme confidence. This creates a "veneer of correctness" that can mask deep logical flaws.

- The Trust Creep: Every time an AI provides a helpful snippet, our cognitive guard drops. After a few successful interactions, the brain begins to treat the AI as a reliable teammate rather than a probabilistic, non-deterministic system. We stop double-checking assumptions because the tool seems always right, until it isn't.

- The Overload Point: When AI lets a developer generate a 500-line feature in seconds, the volume of code explodes. This leads to Passive Implementation: overwhelmed by the scale of the output, the developer drops their guard and starts skimming instead of reviewing. This is exactly where architectural rot begins.

Code Review Bottleneck

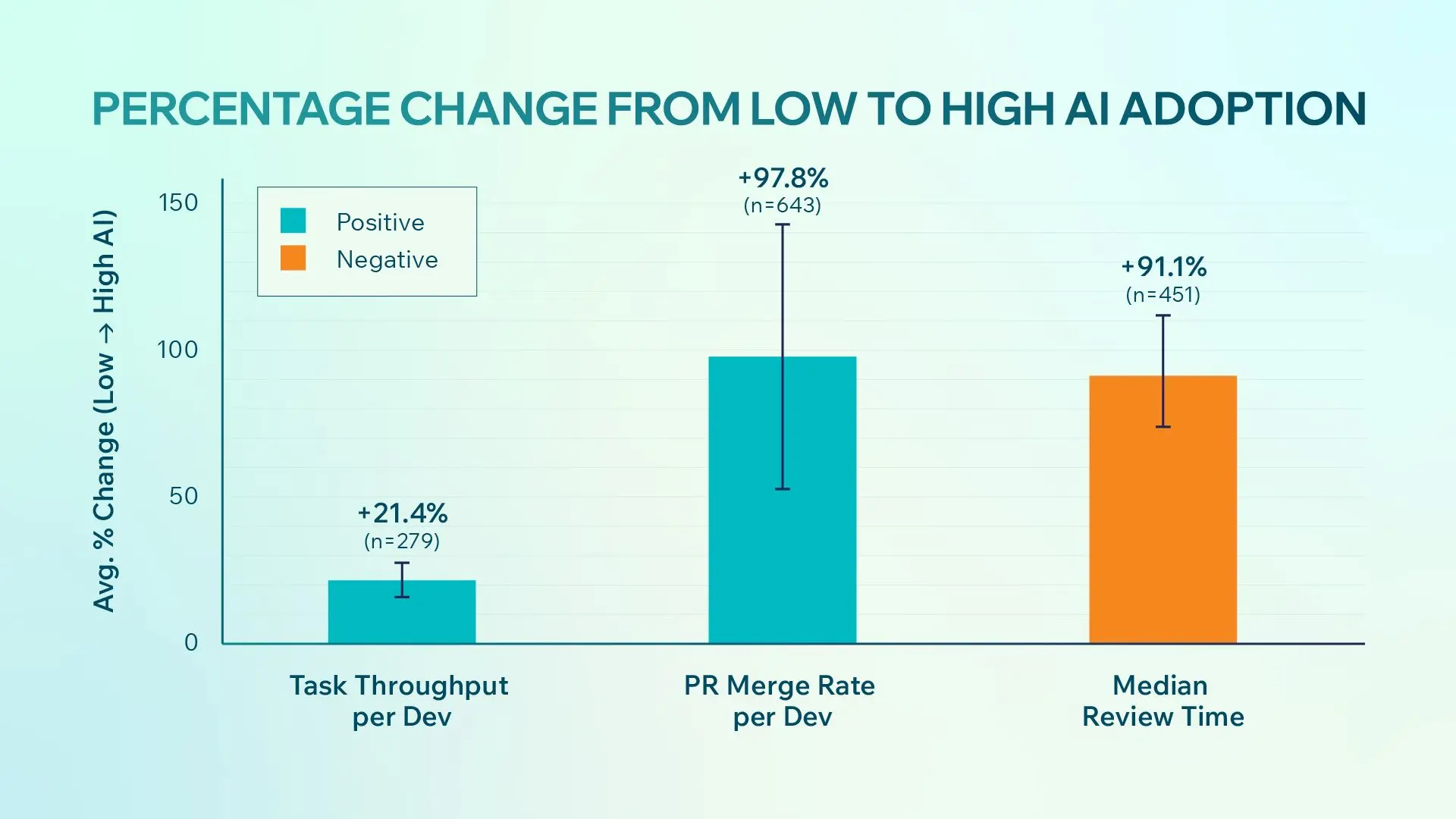

Data from Faros AI, covering more than 1,250 teams, reveals a sobering reality: while tasks are completed faster, the surge in pull request (PR) volume has nearly doubled review times.

We are seeing a "reviewer's tax," where AI-generated code can take more effort to validate than human-written code. Because AI is prone to hallucinations and over-engineering, reviewers cannot rely on the shared mental model they have with human colleagues. This creates a massive bottleneck: the speed gained in writing is often lost in the friction of verification.
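One way to keep review load bounded is to cap how much change a single PR may carry before a human looks at it. The Python sketch below sums the tab-separated output of `git diff --numstat` and checks it against a review budget. The 400-line and 10-file limits and the function names are hypothetical placeholders, not figures from the Faros AI data.

```python
from dataclasses import dataclass


@dataclass
class DiffSummary:
    files: int
    added: int
    deleted: int

    @property
    def changed(self) -> int:
        return self.added + self.deleted


def summarize_numstat(numstat: str) -> DiffSummary:
    """Sum `git diff --numstat` output (one 'added<TAB>deleted<TAB>path' line per file)."""
    files = added = deleted = 0
    for line in numstat.splitlines():
        if not line.strip():
            continue
        add_s, del_s, _path = line.split("\t", 2)
        files += 1
        # Binary files are reported as "-<TAB>-<TAB>path": count the file, not its lines.
        if add_s != "-":
            added += int(add_s)
        if del_s != "-":
            deleted += int(del_s)
    return DiffSummary(files, added, deleted)


def review_budget_ok(summary: DiffSummary, max_lines: int = 400, max_files: int = 10) -> bool:
    """Hypothetical policy: PRs above these limits should be split before review."""
    return summary.changed <= max_lines and summary.files <= max_files
```

A CI job could feed it the output of `git diff --numstat origin/main...HEAD` and ask the author to split the PR when the check fails.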

Modern Security Threats

Standardizing AI tools isn't just about speed; it's about closing new, sophisticated security gaps that manual processes can no longer catch.

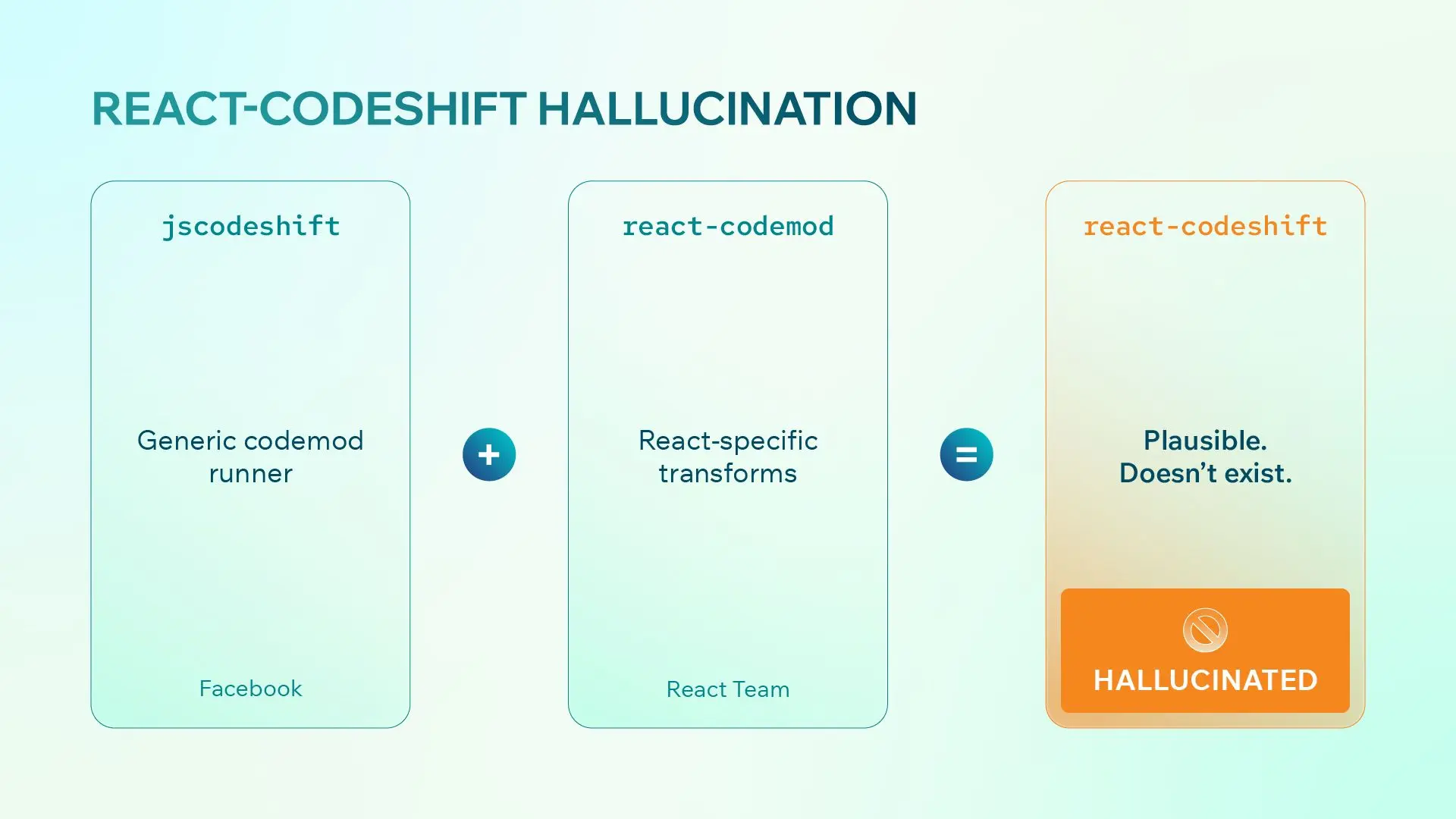

“Slopsquatting” (AI Package Hallucinations)

Attackers now monitor the package names that AI models commonly hallucinate and use them to spread malware.

For instance, AI models recently hallucinated a nonexistent npm package called react-codeshift (a mashup of two real tools), which was written into AI agent instruction files and quickly spread to over 200 repositories.

Attackers register these hallucinated package names with malicious payloads. If an engineer on autopilot, or an autonomous AI agent, blindly runs the AI-suggested install command, they can inadvertently compromise the entire supply chain.
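A simple defense is to refuse any install command that names a package the team has not already vetted. Checking only that a package exists on the registry is not enough, because attackers register exactly these hallucinated names. The Python sketch below parses an `npm install` command and returns any package missing from an allowlist. The allowlist and the function names are hypothetical, and a real guard would also need to handle other package managers and registry flags.

```python
import shlex


def package_name(spec: str) -> str:
    """Drop a version or tag: 'react@18' -> 'react', '@types/node@20' -> '@types/node'."""
    # Scoped names start with '@', so the version separator is the *next* '@', if any.
    at = spec.find("@", 1) if spec.startswith("@") else spec.find("@")
    return spec if at == -1 else spec[:at]


def unapproved_packages(command: str, approved: set[str]) -> list[str]:
    """Return packages in an `npm install` command that are not on the allowlist."""
    tokens = shlex.split(command)
    if len(tokens) < 2 or tokens[0] != "npm" or tokens[1] not in {"install", "i", "add"}:
        return []  # not an npm install; out of scope for this sketch
    # Skip flags such as -D or --save-dev; everything else is treated as a package spec.
    names = [package_name(t) for t in tokens[2:] if not t.startswith("-")]
    return [n for n in names if n not in approved]


if __name__ == "__main__":
    # Hypothetical allowlist, e.g. derived from packages already in the lockfile.
    approved = {"react", "jscodeshift", "@types/node"}
    print(unapproved_packages("npm install react-codeshift", approved))  # ['react-codeshift']
```

An agent harness could run this check before executing any shell command it generates and require human approval whenever the returned list is non-empty.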

The Secrets Explosion

Research from Apiiro indicates that AI-generated code introduces 153% more design flaws and leads to a 40% increase in secrets exposure. Credentials and API keys are frequently left in AI-generated scaffolding code, where engineers overlook them.
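Dedicated secret scanners run large, tuned rule sets, but the core idea is pattern matching over every line that enters the repository. This minimal Python sketch assumes three illustrative rules: the `AKIA` prefix of long-term AWS access key IDs, PEM private-key headers, and quoted values assigned to names like `api_key` or `password`. Expect both false positives and misses; it illustrates the technique rather than replacing a dedicated tool.

```python
import re

# Illustrative rules only; real scanners ship many more, carefully tuned patterns.
SECRET_PATTERNS = {
    # Long-term AWS access key IDs: "AKIA" followed by 16 uppercase letters/digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # PEM-encoded private keys (RSA, EC, OpenSSH, ...).
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    # A quoted value of 8+ characters assigned to a credential-like name.
    "hardcoded_credential": re.compile(
        r"""(?i)(api[_-]?key|secret|token|password)\s*[:=]\s*["'][^"'\s]{8,}["']"""
    ),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every rule that matches a line."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, rule))
    return hits
```

Running a check like this as a pre-commit hook catches leaked credentials before they reach the remote, which matters because a secret pushed once has to be rotated, not just deleted.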

Shadow AI and Data Leakage

According to Sonar’s 2026 State of Code Survey, over 60% of engineers use personal AI accounts for professional work. This creates a massive organizational blind spot, as proprietary client logic and sensitive data are fed into public models without oversight.

"IDEsasters" (Indirect Prompt Injections)

In late 2025, over 30 vulnerabilities were discovered in major AI-native IDEs. Attackers can hide invisible prompt injections in a project’s README or a third-party URL. If an engineer has their AI agent set to "always allow" or "auto-pilot," the agent can be tricked into altering workspace settings or executing arbitrary terminal code without the user’s knowledge.
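Because these injections rely on text that renders as nothing in an editor, one cheap mitigation is to scan untrusted files for invisible code points before an agent reads them. The Python sketch below flags zero-width characters, bidirectional control characters, and the Unicode tag block (U+E0000 to U+E007F). The selection of code points is an assumption, not an exhaustive list of what the reported vulnerabilities used.

```python
import unicodedata

# Zero-width and bidi-control code points. The Unicode tag block (U+E0000-U+E007F)
# is checked by range below: it is invisible in most editors but still reaches a model.
SUSPICIOUS = {
    0x200B, 0x200C, 0x200D, 0x2060, 0xFEFF,   # zero-width characters
    0x202A, 0x202B, 0x202C, 0x202D, 0x202E,   # bidi embeddings/overrides
    0x2066, 0x2067, 0x2068, 0x2069,           # bidi isolates
}


def invisible_chars(text: str) -> list[tuple[int, int, str]]:
    """Return (line, column, 'U+XXXX NAME') for each suspicious invisible character."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for col, ch in enumerate(line, start=1):
            cp = ord(ch)
            if cp in SUSPICIOUS or 0xE0000 <= cp <= 0xE007F:
                name = unicodedata.name(ch, "UNNAMED")
                findings.append((lineno, col, f"U+{cp:04X} {name}"))
    return findings
```

This does not replace turning off "always allow" modes: a plain-English injection written in ordinary visible text passes straight through it.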

Key Takeaways for Technology Leaders

To balance speed with engineering excellence, we recommend a four-pillar approach to standardization:

1. Onboard AI Like a New Junior

Don't treat AI as a magic box. Instead, onboard your tools with your project's specific context. Use project-level rule files, such as .cursorrules, CLAUDE.md, or AGENTS.md, to define your coding standards, architectural patterns, and documentation requirements.

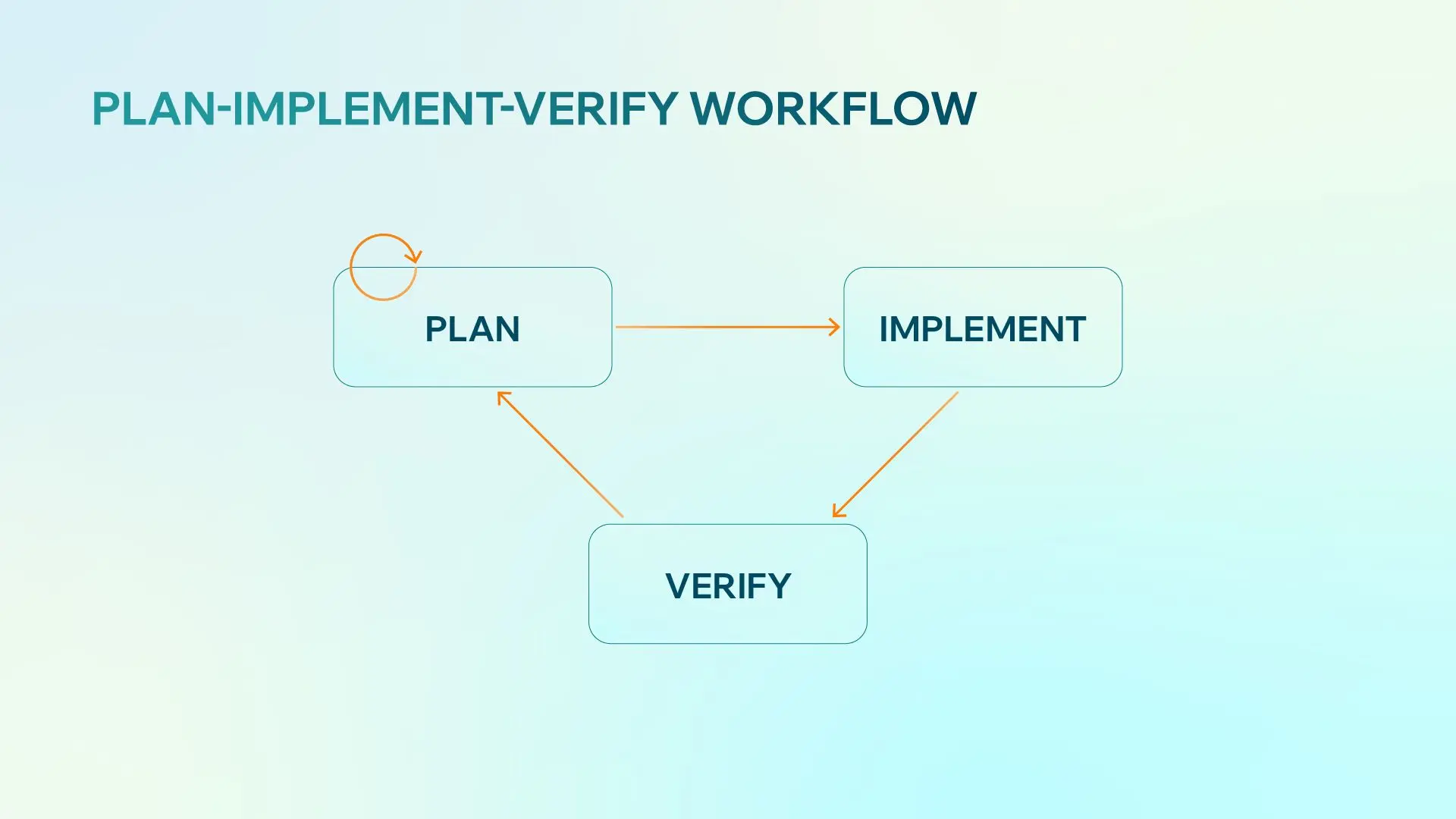

2. The Plan-Implement-Verify Workflow

As AI models evolve, the industry tends to obsess over version numbers. In a production environment, however, the marginal efficiency gain between model versions is secondary. The real game-changer isn't the model you use; it's the workflow you use to coordinate with it, which is why the most successful teams prioritize workflow over specific models.

- Plan: Use Plan Mode to define specifications before any code is written. Task the AI with exploring requirements and identifying edge cases, then refine the plan together. The golden rule is that no production code is written until you approve the final blueprint, which prevents "code first, think later" technical debt.

- Implement: Switch to Agent Mode for the heavy lifting. The AI works systematically through the codebase, creating files and modifying functions strictly according to the approved blueprint. This reduces the risk of the AI going rogue or introducing inconsistent architectural patterns.

- Verify: While AI-written code can pass unit tests, only a human can verify whether the feature solves the business problem. Validate the output against the criteria set during planning, then create a safe save point (for example, a commit) so that a clean, human-validated baseline is always available if the AI deviates on the next feature.
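The ordering rules in these three steps can be modeled as a small state machine. The Python sketch below is a hypothetical illustration, not a feature of any particular tool's Plan or Agent Mode: it refuses code changes until a plan with acceptance criteria is approved, and records a save point only when a human confirms every criterion.

```python
from enum import Enum, auto


class Phase(Enum):
    PLAN = auto()
    IMPLEMENT = auto()
    VERIFY = auto()


class WorkflowError(RuntimeError):
    """Raised when a step is attempted out of order."""


class FeatureWorkflow:
    """Hypothetical guard for Plan -> Implement -> Verify on a single feature."""

    def __init__(self, feature: str) -> None:
        self.feature = feature
        self.phase = Phase.PLAN
        self.criteria: list[str] = []     # acceptance criteria approved in the plan
        self.changes: list[str] = []      # code changes made during implementation
        self.save_points: list[str] = []  # human-validated baselines

    def approve_plan(self, criteria: list[str]) -> None:
        if not criteria:
            raise WorkflowError("a plan needs at least one acceptance criterion")
        self.criteria = list(criteria)
        self.phase = Phase.IMPLEMENT

    def record_change(self, description: str) -> None:
        # Golden rule: no production code until the blueprint is approved.
        if self.phase is not Phase.IMPLEMENT:
            raise WorkflowError(f"cannot change code during {self.phase.name}")
        self.changes.append(description)

    def verify(self, confirmed: set[str]) -> bool:
        """A human confirms criteria; all must pass before a save point is created."""
        if self.phase is not Phase.IMPLEMENT:
            raise WorkflowError(f"cannot verify during {self.phase.name}")
        if any(c not in confirmed for c in self.criteria):
            return False  # stay in IMPLEMENT with the same plan and fix the gaps
        self.phase = Phase.VERIFY
        self.save_points.append(f"{self.feature}: verified after {len(self.changes)} change(s)")
        return True
```

In practice the save point would typically be a commit or tag; here it is just a string so the sketch stays self-contained.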

3. Automate the Quality Gate

Since manual PR review alone no longer scales, you must shift your security and quality checks left. Integrate deterministic quality gates, including Static Application Security Testing (SAST), linters, and automated test suites, directly into your CI/CD pipeline. This transforms the human role from a code reader to a validator of the automated system.
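The gate itself can be a thin script that CI runs on every PR. This Python sketch runs each check as a subprocess and fails the build if any check exits non-zero or cannot be found. The specific tools (Ruff as the linter, Semgrep for SAST, pytest for tests) are assumptions chosen for illustration; substitute whatever your stack uses.

```python
import subprocess
import sys


def run_quality_gate(checks: list[tuple[str, list[str]]]) -> int:
    """Run every check; return 0 only if all of them exit with status 0."""
    failed = []
    for name, argv in checks:
        try:
            result = subprocess.run(argv, capture_output=True, text=True)
            passed = result.returncode == 0
            output = result.stdout + result.stderr
        except FileNotFoundError:
            # Fail closed: a tool missing from the CI image must not pass silently.
            passed = False
            output = f"command not found: {argv[0]}"
        print(f"[{'PASS' if passed else 'FAIL'}] {name}")
        if not passed:
            failed.append(name)
            print(output)
    if failed:
        print("Quality gate failed: " + ", ".join(failed))
        return 1
    return 0


if __name__ == "__main__":
    # Illustrative tool choices; replace with your own linter, SAST scanner and tests.
    checks = [
        ("lint", ["ruff", "check", "."]),
        ("sast", ["semgrep", "scan", "--config", "auto", "--error"]),
        ("tests", ["pytest", "-q"]),
    ]
    sys.exit(run_quality_gate(checks))
```

The script runs every check rather than stopping at the first failure, so the author sees all problems in one pass, and it treats a missing tool as a failure so that a misconfigured runner cannot quietly wave a PR through.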

4. Strengthen Engineering Fundamentals

All of the workflows above rely entirely on your underlying expertise. AI helps us get 70% of the way there, but the final 30% belongs to you.

- The Paradox of Supervision: To effectively validate an AI's code, you need incredibly strong engineering fundamentals. If you use AI as a crutch to write everything, the exact skills you need to audit that code will start to decay.

- Trust Judgment Over Tone: AI tools are built to sound persuasive. They will present completely broken logic with absolute, unwavering confidence. Do not let a chatbot's tone replace your actual engineering judgment.

- The Ultimate Validator: AI is not replacing the engineer but rather redefining the role. Your job is to take almost-right AI output and transform it into secure, enterprise-grade software.

Conclusion

AI is the newest layer of abstraction in computer science. For organizations to thrive in 2026, the goal is not to produce more code, but to master the toolchains and quality gates that ensure AI-generated output meets the same rigorous standards as human-written code.

By standardizing these tools today, you protect your pipeline from IDEsasters and data leakage while finally realizing the delivery speed AI has promised.

Leo Le

Technical Lead

Leo is a Technical Lead with twelve years of experience in Golang, Ruby, JavaScript, and their frameworks, and has led successful projects such as EA's Content Hub. He is skilled in DevOps, cloud infrastructure, and Infrastructure as Code (IaC). With a computer science degree and an AWS certification, Leo excels in team leadership and technology optimization.